Today we are releasing the final report of the Public Interest Corpus project. A stable, citable version is here: The Public Interest Corpus: A Framework for Implementation. Because we want to encourage additional feedback, we’re also sharing a version here that allows you to comment.

The report is the product of more than a year of work supported by the Mellon Foundation, in which we asked how research libraries can make books data available for AI training and computational research in ways that serve the public interest, rather than reinforcing the existing concentration of access to texts among a small number of well-resourced commercial actors.

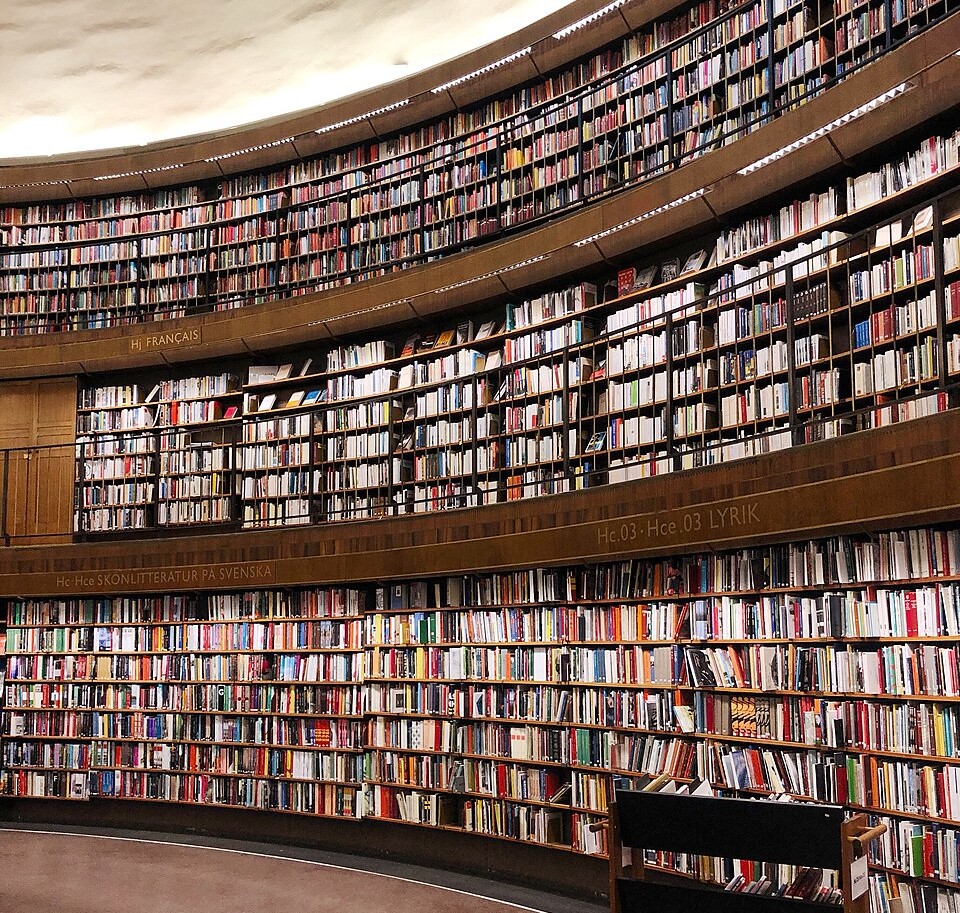

We’ve talked about the starting point of this project before: books are now widely recognized as among the highest-quality training data available for AI systems—they reflect sustained editorial processes and capture historically deep records of human thought across many disciplines and languages. Research libraries hold them at scale, often already in digital form. But access to that data for AI development has so far been shaped largely by which commercial actors have the resources to license, scrape, or otherwise acquire it. Academic researchers and smaller public interest organizations have been mostly outside that conversation.

The project was designed to ask whether libraries could change that, organized around their public interest mission and operating within the legal frameworks that already govern their work. Over the course of 2025, we engaged with researchers, librarians, authors, publishers, and technologists through workshops at Northeastern, NYU, and the University of California Office of the President, and through presentations at CNI, the Charleston Conference, AI4Libraries, and other venues. The report distills what we learned about services, governance, legal strategy, and sustainability. A few key takeaways:

- Stakeholders consistently asked for a service oriented toward access for academic and nonprofit researchers, with programmatic bulk access for computational work and discovery-oriented access for students and members of the public.

- Rather than build something new from scratch, the report recommends partnering with an existing academy-owned research service—HathiTrust, for example, is a natural candidate given its mission alignment, infrastructure, and established collaborative relationships across the research library community.

- Coordination of sourcing across institutions, e.g., on engagement with commercial digitization partners, should sit within that framework, drawing on lessons from earlier work such as the Google Books project, where terms have sometimes constrained downstream library service development more than necessary.

Throughout the project we gave special attention to legal issues, particularly in light of the large number of AI copyright suits pending. From cases like AV ex rel. Vanderhye v. iParadigms (2009) (plagiarism detection tool) through Authors Guild v. HathiTrust (2014) and Authors Guild v. Google (2015), courts have recognized non-expressive computational uses of texts as transformative fair use, with text and data mining specifically identified as the kind of research application fair use is meant to enable. The 2025 decisions in Bartz v. Anthropic and Kadrey v. Meta extend that foundation into the AI training context. Bartz in particular reinforced and extended what the Google Books court concluded about the legality of converting lawfully held print copies into digital form. Those cases paint an encouraging picture for fair use as applied to AI training. Thomson Reuters v. ROSS Intelligence (2025) is an important counterpoint: it concluded that copying for AI training was not fair use, in a case focused on direct commercial substitution between competing legal research tools. Even there, though, the focus was tightly constrained to competing commercial products. Our recommendation is that the Public Interest Corpus, focused on non-commercial research and scholarly uses, operate squarely within this established line of cases, while exercising additional care where specific features of a use (e.g., contractual constraints from prior digitization agreements, downstream commercial applications, verification of independent researchers) warrant heightened attention.

This report closes the planning phase. What comes next is implementation, which would include identifying the right institutional home and sustaining engagement with authors, publishers, and researchers about how this resource should evolve. None of this is novel work for libraries. What is novel is the scale and the moment, and the opportunity to ensure that the next generation of AI tools is built on a foundation that reflects a focus on supporting research, scholarship and learning. To do all of this requires resources, and we’re currently working to secure funding to get this started (stay tuned for more in the coming months).

We are grateful to the Mellon Foundation for supporting this work, and to the many advisory board members, workshop participants, and interview subjects who shaped the report along the way. Personally, I am grateful to my co-PI Dan Cohen at Northeastern, and to Thomas Padilla (now at the University of Nebraska-Lincoln) and Giulia Taurino for their great work on this project. You can visit the project website at publicinterestcorpus.org.
